As I reach my 20 year anniversary with Intel today, I reflect upon advice that resonates with me. I especially like the posting

http://perspectives.mvdirona.com/2016/11/advice-to-someone-just-entering-our-industry/, including the admonition to "Play the Long Game."

20 years. And all doing firmware. Several different firmware architectures and many instances of EFI-style firmware (e.g., Release 1-Release 8.1/2/3/4/5/6, Release 9 "aka EDKII").....

Hopefully this won't encourage me to abuse logical fallacies like argument from authority, saying 'In my 20 years at Intel we.....' Instead you're only as good as the last game you've played, not your record of games.

Or having a Whiggish view of tech history. Instead it's more Kolmogorov probability than monotonically increasing (or decreasing) progress and determinism.

Speaking of history, my original badge from February 24, 1997 can be found below, with the drop-e logo and, gasp, a suit and tie.

And now

Ah, the thick head of hair that I had in the 90's. And my Harry Potter glasses. I recall visiting Shanghai and Suzhou in '01. In the latter city the locals pointed at me in those crazy glasses and at a scratch on my forehead from my two year old daughter (that resembled the lightning bolt), reinforcing the Potter doppelganger experience. Pre-SARS in Shanghai, so it was still possible to eat snake and drunken shrimp, and to dine with a colleague from a south China province whose restaurant jaunt truly lived up to the saying "... the Chinese eat anything with four legs except a table, and anything that flies that isn't an airplane..."

http://simonlesser.blogspot.com/2009/06/food.html.

So my journey at Intel started in 1996 after contact from an Intel recruiter while I lived in Houston, TX. He exhorted me to join Intel, especially given the 'imminent' Merced CPU development. I interviewed in Hillsboro, OR in October 1996 and was told that I could go to Oregon for IA32 Xeon, or DuPont, WA for IA-64 Merced. Having grown up in Houston, Texas and not realizing that the Pacific Northwest even existed prior to this conversation, I naturally chose DuPont in order to be part of the 64-bit revolution.

Fast forward to February 1997. My wife and I moved to Olympia, WA. Given some of the, er, delay in Merced, I had the opportunity to pick up a Masters at the University of Washington

and at the same time work on getting our Itanium firmware ready. This included working on the System Abstraction Layer (SAL)

http://www.intel.com/content/www/us/en/processors/itanium/itanium-system-abstraction-layer-specification.html with my BIOS hero/guru Sham D. in Hillsboro, along with Mani and Suresh in Santa Clara. The original boot flow entailed SAL-A for memory initialization, SAL-B for platform initialization and the "SAL_PROC" for the OS-visible API's to enable boot-loaders. The loader API into the firmware was a direct mapping of the PC/AT BIOS calls, with examples including instances like SAL_PROC 0x13 having a similar command set to int13h

https://en.wikipedia.org/wiki/BIOS_interrupt_call.

As an arbitrary pedantic sidebar, you definitely see a pattern in firmware for 'phases' that typically include 'turn on memory,' 'turn on platform', and 'provide the boot loader environment.' Itanium had SAL-A, SAL-B, EFI. UEFI PI has SEC, PEI, DXE, BDS/TSL/UEFI API's. coreboot has bootblock, rom stage, ram stage, payload (including Seabios or UEFI or Depthcharge or ...). Power8 has hostboot, skiboot, and Petitboot (or EDKII UEFI). The workstation BIOS for IA-32 below had VM0, VM1, VM2, Furball. PC/AT BIOS has bootblock, POST, BIOS runtime. You see a pattern here?

Writing SAL_PROC code was pretty exciting. It could be invoked in virtual or physical mode. With hand-crafted Itanium assembly it was pretty reasonable to write position independent code (PIC) and use the GP register to discern where to find global data. But in moving to C, writing portable C code to abstract the SAL services was quite a feat. This is distinct from the UEFI runtime, where we are callable in a 1:1 mapping and then non-1:1 after the invocation of the SetVirtualAddressMap call by the OS kernel.

Regarding gaps with SAL_PROC as a boot firmware interface, as chronicled in page 8 of

http://www.intel.com/content/dam/www/public/us/en/documents/research/2011-vol15-iss-1-intel-technology-journal.pdf, Intel created the Intel Boot Initiative (IBI) as a C-callable alternative. The original IBI specification looked a lot like ARC

http://www.netbsd.org/docs/Hardware/Machines/ARC/riscspec.pdf. Ken R., a recent join to Intel from MS (where he had a lot of DNA for ACPI), helped turn IBI into what we know as EFI 1.02, namely evolving IBI to have discoverable interfaces like protocols (think COM IUnknown::QueryInterface) and Task Priority Levels (think NT IRQLs), and of course the CamelCase coding style and use of the CONTAINING_RECORD macro for information hiding of private data in our public protocol interface C structures. Many thanks to Ken.
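For readers who have not met the CONTAINING_RECORD idiom, here is a minimal sketch in C of how it hides private data behind a public interface structure. Everything named DEMO_* or DemoCounter* is hypothetical and invented for this illustration; it is not taken from any EFI specification or code base, and the macro below is a plain offsetof-based stand-in rather than the definition from any particular header.

```c
#include <assert.h>
#include <stddef.h>
#include <stdint.h>

/* Recover a pointer to the enclosing structure from a pointer to one of
   its fields. Stand-in definition for illustration only. */
#define CONTAINING_RECORD(ptr, type, field) \
    ((type *)((char *)(ptr) - offsetof(type, field)))

/* Public interface (hypothetical): callers only ever see this structure. */
typedef struct DEMO_COUNTER_PROTOCOL DEMO_COUNTER_PROTOCOL;
struct DEMO_COUNTER_PROTOCOL {
    uint32_t (*Next)(DEMO_COUNTER_PROTOCOL *This);
};

/* Private instance data (hypothetical): the public interface is embedded
   as a field, so the driver's state stays invisible to callers. */
#define DEMO_COUNTER_SIGNATURE 0x52544E43u

typedef struct {
    uint32_t              Signature;  /* sanity check on the recovered pointer */
    uint32_t              Count;      /* private state */
    DEMO_COUNTER_PROTOCOL Protocol;   /* the only part handed to callers */
} DEMO_COUNTER_PRIVATE;

/* Implementation of Next(): walk back from the public pointer to the
   private record, check the signature, then bump the private counter. */
static uint32_t DemoCounterNext(DEMO_COUNTER_PROTOCOL *This)
{
    DEMO_COUNTER_PRIVATE *Private =
        CONTAINING_RECORD(This, DEMO_COUNTER_PRIVATE, Protocol);

    assert(Private->Signature == DEMO_COUNTER_SIGNATURE);
    Private->Count += 1;
    return Private->Count;
}

/* Initialize caller-provided storage and return only the public interface. */
DEMO_COUNTER_PROTOCOL *DemoCounterInit(DEMO_COUNTER_PRIVATE *Private)
{
    Private->Signature     = DEMO_COUNTER_SIGNATURE;
    Private->Count         = 0;
    Private->Protocol.Next = DemoCounterNext;
    return &Private->Protocol;
}
```

A caller would hold only the DEMO_COUNTER_PROTOCOL pointer returned by DemoCounterInit and invoke p->Next(p); the Count field never appears in the public definition, which is the information hiding described above.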

Building out EFI was definitely evolutionary. It started from the 'top down' with EFI acting as that final phase/payload in the first instances with alternative platform initialization instances underneath. This view even informed the thema of 'booting from the top down' that informed how we sequenced the chapters in the 2006 Beyond BIOS book, for example. The initial usage of EFI was the 'sample implementation' built on top of the reference SAL code and a PC/AT BIOS invoked by the EFI 'thunk' drivers.

As we moved into the 2000's, the Intel Framework Specifications were defined in order to replace the SAL for Itanium and PC/AT BIOS for Itanium and IA-32, respectively. We internally referred to things like SAL + BIOS + EFI Sample as a "Franken-BIOS." The associated code base moved from the EFI Sample to the EFI Developer Kit, or EDKI, to distinguish it from the EDKII done in the later 2000's. This internal code-base was called 'Tiano', thus the name of community sites like

http://www.tianocore.org. Someone said the name came from the sailor with Columbus who first noticed America, but the only citation I could find publicly is the "Taino" tribe with whom Columbus engaged.

As a funny sidebar, I do recall the meeting where someone found "Tiano Island"

http://pf.geoview.info/tiano,4033365 on the web. At the time it cost some number of millions of dollars. The original director of our team, numbering just a few engineers in the room, said 'let's each pool a couple percent of our stock options and buy the island.' I guess Stu had a much more significant equity position than I did, as a lowly grade 7 engineer.

In the late 90's at DuPont, SAL and EFI sample were not the only code base activities. While in DuPont the erstwhile workstation group also created a clean-room replacement for the early boot flow. This started on IA32 and the OS interface was the PC/AT BIOS. For this effort we didn't have an image loader and instead just used non-1:1 GDT settings in order to run the protected mode code. For booting, the protected mode code provisioned the 16-bit BIOS blob with information like the disk parameters, etc, so that the 16-bit code was just the 'runtime interface.' The 16-bit BIOS blob was called the 'furball' since we hoped to 'cough it up' once the industry had transitioned into a modern boot loader environment, such as EFI.

I still recall colleagues in the traditional business units yelling 'you'll never pass WHQL' with the above solution, but it did work. In fact, the work informed the subsequent interfaces and development with the Intel Framework Compatibility Support Module

http://www.intel.com/content/www/us/en/architecture-and-technology/unified-extensible-firmware-interface/efi-compatibility-support-module-specification-v096.html.

We then ported the workstation code to boot the first Itanium workstation. I left that effort and joined the full EFI effort afterward. I recall the specific event which precipitated the decision. I was chatting with Sham and the workstation BIOS lead in the latter's cube. The lead said 'Now that we have our BIOS in modular code "Plug-In Modules" (PIM's) we can tackle the option ROM problem.' I thought to myself that just refactoring code into separate entities isn't the challenge in moving from PC/AT 16-bit option ROM's into a native format, it's all about the 'interfaces,' namely how would a 'new' option ROM snap into a modern firmware infrastructure. IBI (now called EFI) was on that path to a solution, whereas a chunk of 'yet another codebase with PIM's' wasn't. Thus I was off to chatting with my friend Andrew, then lunch with Mark D, and onto the EFI quest in 1999. Quite the firmware long-game.

Next we're off finishing the first EFI, going from IBI to EFI .98 to EFI 1.02.

Next we're off on a cross-divisional team to create the '20 year BIOS replacement' called Tiano and the Intel Framework Specifications are born.

Next we solve the option ROM and driver problem with EFI 1.10. Along the way between 1.10 and UEFI 2.0 we incubate a lot of future technology with the never-released 'EFI 1.20' work.

Next Andrew Fish and I ported EDK to Intel 64. And I had fun with a port to XScale back in 2001. I have always enjoyed firmware bring-up on new CPU's.

Fast forward to 2005. The EFI specification became the UEFI 2.0 specification, and many of the Intel Framework Specifications became the UEFI Platform Initialization specification. Wrote the first EFI interface and platform spec for TPM measured boot

https://github.com/vincentjzimmer/Documents/blob/master/EFIS004Fall06.pdf.

Fast forward to the 2010's. More open source. More device types. More CPU ports. Continue to evolve network booting, such as IPV6

https://tools.ietf.org/rfc/rfc5970.txt and HTTP

https://github.com/vincentjzimmer/Documents/blob/master/UEFI-Recovery-Options-002-1.pdf. Good stuff. Helped deliver UEFI Secure Boot

https://github.com/vincentjzimmer/Documents/blob/master/SAM4542.pdf https://github.com/vincentjzimmer/Documents/blob/master/UEFI-Networking-and-Pre-OS-Security.pdf.

In parallel, I often had side firmware engagements, including a fun tour of duty helping out the solid state disk (SSD) team on firmware.

I still believe in better living through tools, too, whether they have landed in the community

http://www.uefi.org/sites/default/files/resources/2014_UEFI_Plugfest_04_Intel.pdf, almost made it

https://github.com/vincentjzimmer/Documents/blob/master/Vij_KRHZRWL_13.pdf https://github.com/termite2/Termite, or are in incubation

https://www.usenix.org/system/files/conference/woot15/woot15-paper-bazhaniuk.pdf.

Fast forward to 2017. Year 20. It's still a lot of fun solving crossword puzzles with hardware and firmware.

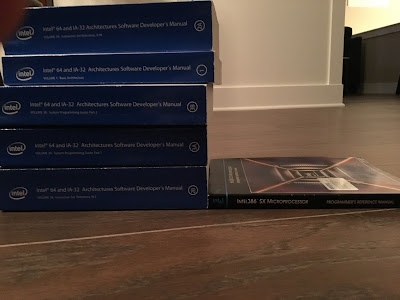

During my time at Intel I've also appreciated the wisdom of others, whether through the mentoring of direct interaction or the written word. For the latter I heartily recommend keeping the following close at hand.

So am I done this morning? Let's do a final rewind to February 1992 when I jumped into industry in Houston. First I wrote firmware for embedded systems attached to natural gas pipelines

http://www.emerson.com/resource/blob/133882/bb9c3232256dfab98cc6a20a27d43c1f/document-3-9000-309-data.pdf - sensors, serial protocols with radio interfaces to SCADA host, control algorithms, I2C pluggable expansion cards, loaders in microcontroller mask ROM's, porting a lot of evil assembly to C code... fun stuff. The flow computer/Remote Telemetry Unit (RTU) work was an instance of the Internet of Things before the IoT was invented. Then on to industrial PC BIOS and management controller firmware. Then on to hardware RAID controllers and server BIOS. And then Intel in February 1997. 5 years of excitement in Houston prior to my Intel journey.

So I guess that sums out to 25. Now I feel tired. Time to stop blogging and playing the rewinding history game. Here's looking to the next 25.

Cheers.